Artificial Intelligence (AI) is increasingly used in many fields, including education. One such technology is AI language models such as ChatGPT, which can produce human-like responses. However, their use in GIHE academic assessments is a breach of academic integrity and puts you at risk of academic misconduct, which is sanctioned under the GIHE Academic Misconduct Policy.

Challenges to the quality of your work

- AI-generated text is not original, innovative, or critical, so it cannot help you develop creative problem-solving skills or earn the high grades that faculty award in your BBA or MSc program.

- Assessed work aims to showcase your acquired knowledge and developed skills, and since AI-generated text cannot demonstrate these, you will not be rewarded if you incorporate it in your assessments.

Challenges to the integrity of your work

- When you submit assessed work, you certify that you are the author of this original work.

- AI-generated text (ChatGPT) and translations (DeepL, Google Translate) are not your original work, so you must provide an APA7 in-text citation and reference list entry for them, as you would for any other type of source. See this Library page on how to reference AI-generated text.

- If you decide to include AI-generated text in assessed work, you should add an appendix after your reference list detailing your inputs, the AI tool’s outputs, and a brief description of how you have used the AI-generated text in your work.

Guidance

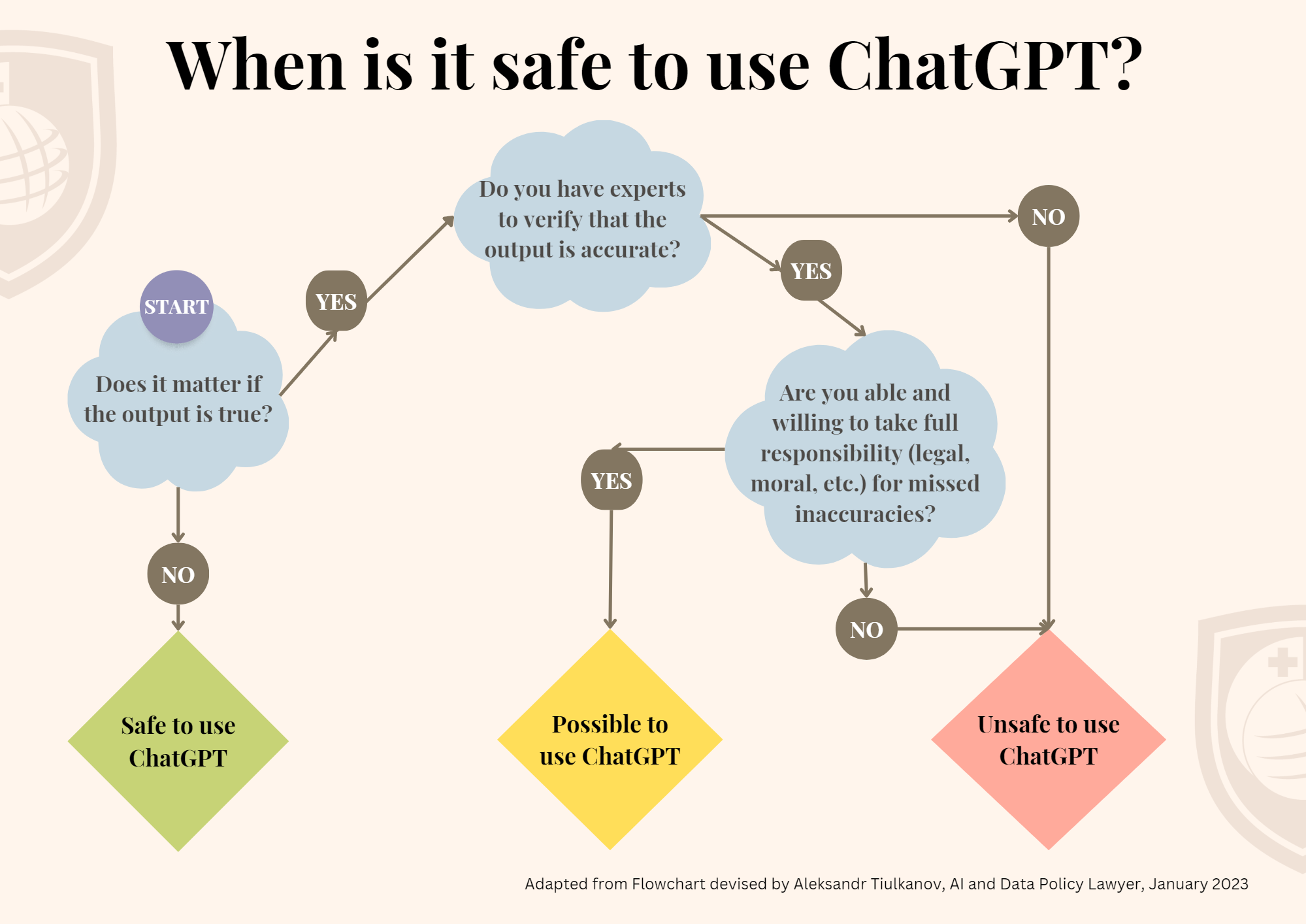

3 simple questions. Here are three straightforward questions to ask yourself to determine if it is safe to use ChatGPT:

Be aware of AI hallucinations. These refer to generated outputs that seem highly plausible but are either factually incorrect or unrelated to the given context due to biases or lack of training data. This results in unreliable or misleading responses (Hillier, 2023). UCL lists the limitations of ChatGPT as follows (UCL, 2023):

- Whilst their output can appear plausible and well written, AI tools frequently get things wrong and can’t be relied upon for factual accuracy.

- They perform better in subjects which are widely written about, and less well in niche or specialist areas.

- Unlike a normal internet search, they don’t look up current resources and are currently some months out of date.

- They cannot currently provide references – they fabricate well formatted but fictitious citations.

- They can perpetuate stereotypes, biases and Western perspectives.

Watch this video on the limitations of ChatGPT:

Always fact-check AI-generated outputs. “[AI Large Language Models (LLM)] are essentially machines for matching patterns. Whether the output is ‘true’ is not the point, so long as it matches the pattern” (Bridle, 2023). Always verify the information against trusted sources, experts in the relevant field, or even basic human intuition to ensure that it is reliable and trustworthy.

Plagiarism. ChatGPT can produce text that is almost identical to existing content, which can lead to plagiarism. Plagiarism means copying someone else’s work and presenting it as your own. It is not only dishonest but also against the GIHE academic integrity policy, and it can have serious consequences.

Unfair to yourself. Relying on ChatGPT to answer assessment questions is also unfair to yourself: you are not learning anything new or developing essential skills such as critical thinking, problem-solving, and writing, which are crucial for your academic and future professional success.

Turnitin AI detector. In April 2023, Turnitin released an AI writing detection tool. Turnitin now checks students’ writing against a large database to identify any plagiarism in their work and also flags the likely presence of AI-generated text (Turnitin, 2023).

Learn to use this technology ethically and responsibly. Learn about the risks of poor quality and academic misconduct if you use it to produce assessed work.

Resources:

This is UNESCO’s current position on AI in higher education:

UNESCO. (2023). ChatGPT and artificial intelligence in higher education: Quick start guide. UNESCO. https://www.iesalc.unesco.org/wp-content/uploads/2023/04/ChatGPT-and-Artificial-Intelligence-in-higher-education-Quick-Start-guide_EN_FINAL.pdf

Curious for more information?

Bridle, J. (2023, March 16). The stupidity of AI. The Guardian. https://www.theguardian.com/technology/2023/mar/16/the-stupidity-of-ai-artificial-intelligence-dall-e-chatgpt

Hillier, M. (2023, February 20). Why does ChatGPT generate fake references? TECHE. https://teche.mq.edu.au/2023/02/why-does-chatgpt-generate-fake-references/

The Oxford Review. (2023, March 11). Why ChatGPT does not provide facts: It only looks like it is! [Video]. YouTube. https://youtu.be/77sMlB11uaU

Turnitin. (2023). AI writing detection. Turnitin. https://help.turnitin.com/ai-writing-detection.htm

UCL. (2023, February 22). Engaging with AI in your education and assessment. UCL. https://www.ucl.ac.uk/students/exams-and-assessments/assessment-success-guide/engaging-ai-your-education-and-assessment#Limitations

(Note: This text was originally written by the authors, but refined using feedback and examples provided by ChatGPT)
